Product Vision

LLM Powered No-code or Low-code Autonomous Agents

Building a transformative no-code/low-code AI Agent service that empowers users to create, deploy, and manage intelligent agents seamlessly.

Introduction

We are embarking on a transformative journey to build a no-code/low-code AI Agent service that empowers users of all technical backgrounds to create, deploy, and manage intelligent agents seamlessly. This service is designed to democratize AI agent development by allowing users to define their systems layer by layer using predefined templates and user-friendly interfaces. From defining individual tools to multi-agent collaboration, the service ensures flexibility, scalability, and ease of use.

At the core, this service will:

- Provide a modular, template-driven approach for building AI tools and workflows.

- Enable the creation of complex agent-driven applications through visual design and customizable logic flows.

- Support multi-agent systems with a variety of communication patterns, enabling rich, dynamic interactions between agents.

Agent System Overview

In an LLM-powered autonomous agent system, the LLM functions as the agent's brain, complemented by several key components:

Planning

- Subgoal and decomposition: The agent breaks down large tasks into smaller, manageable subgoals, enabling efficient handling of complex tasks.

- Reflection and refinement: The agent critiques and reflects on its past actions, learns from mistakes, and refines its approach in later steps, thereby improving the quality of the final results.

Memory

- Short-term memory: In-context learning can be viewed as the model using its short-term memory, that is, the information held in its context window.

- Long-term memory: This lets the agent retain and recall an effectively unbounded amount of information over extended periods, typically by using an external vector store with fast retrieval.

Tool use

The agent learns to call external APIs for extra information that is missing from the model weights (often hard to change after pre-training), including current information, code execution capability, access to proprietary information sources and more.

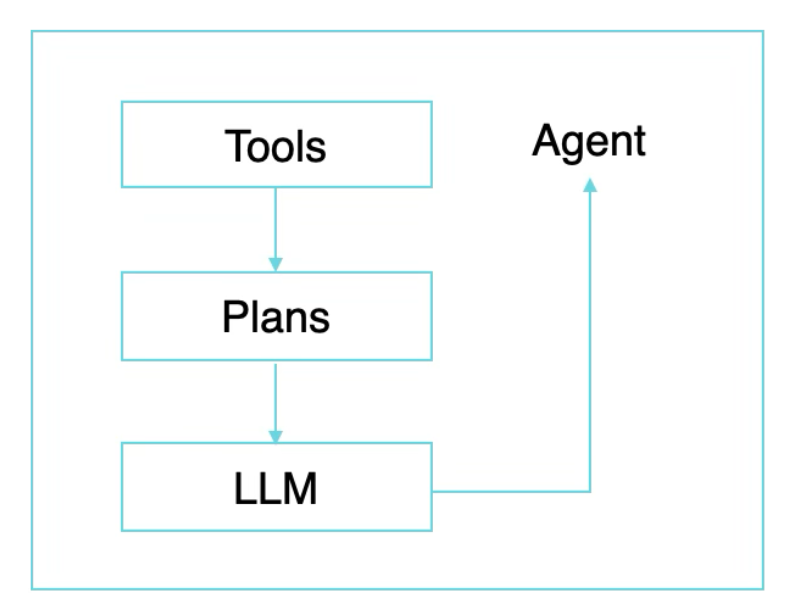

Layer-by-Layer Approach

Our service should enable users to construct AI agents by defining and integrating components layer by layer, which keeps agent design modular and flexible. The key layers include:

- Tool: Units that perform specific tasks.

- Plan: Logic that drives agent behavior.

- LLM Integration: Leveraging Large Language Models via API endpoints.

- Agent: Entities that use tools and plans to perform complex tasks.

- Multi-Agent Collaboration: Systems where agents communicate and collaborate to achieve shared goals.

1. Tool Creation

Tools are the fundamental building blocks of the system. Users will start by defining tools using a predefined template. These tools could be:

- API-based: Users can define tools based on an API endpoint template, specifying the API's URL, input/output parameters, and a description of what the tool does.

- Code-based: Alternatively, users can define tools by writing Python code using the provided template. The template includes fields for specifying function names, input/output parameters, and additional configurations.

Each tool will be given:

- Name: A clear identifier of the tool.

- Description: A detailed explanation of what the tool does.

- Parameters: Inputs and outputs of the tool, specified by the user.
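
As an illustration only, the tool template described above could be modelled roughly as follows. The `ToolSpec` class, its field names, and the `api.example.com` URL are hypothetical and are not the service's actual schema; the sketch only shows how one template can cover both API-based and code-based tools.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolSpec:
    """Hypothetical tool template: a name, a description, and parameters."""
    name: str
    description: str
    inputs: dict[str, str]    # parameter name -> type label
    outputs: dict[str, str]   # result name -> type label
    api_url: Optional[str] = None                  # set for API-based tools
    func: Optional[Callable[..., object]] = None   # set for code-based tools

    def kind(self) -> str:
        """Return "api" or "code"; exactly one backing must be provided."""
        has_api = self.api_url is not None
        has_func = self.func is not None
        if has_api == has_func:
            raise ValueError("set exactly one of api_url or func")
        return "api" if has_api else "code"


def word_count(text: str) -> int:
    """Example user-written function backing a code-based tool."""
    return len(text.split())


# Code-based tool: wraps a Python function.
counter = ToolSpec(
    name="word_count",
    description="Counts whitespace-separated words in a text.",
    inputs={"text": "string"},
    outputs={"count": "integer"},
    func=word_count,
)

# API-based tool: points at an endpoint (placeholder URL).
weather = ToolSpec(
    name="get_weather",
    description="Returns the current weather for a city.",
    inputs={"city": "string"},
    outputs={"summary": "string"},
    api_url="https://api.example.com/weather",
)
```

Keeping both kinds behind one structure means the agent layer can list and describe tools uniformly, regardless of how each one is executed.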

2. Plan Creation

The planning layer defines the logic that drives agent behavior.

Available Workflows:

- ReAct: Interleaves reasoning and acting: the agent reasons about the situation, takes an action, and uses the resulting observation to inform its next step.

- LLM Compiler: Plans the function calls needed to answer a user query as a dependency graph and executes independent calls in parallel, reducing latency.

- StateFlow: State-based workflows where agents move between predefined states based on conditions.
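
To make the ReAct entry in the list above more concrete, here is a minimal sketch of a thought/action/observation loop. It is not the service's implementation: `scripted_policy` is a stand-in for a real LLM call, and the `multiply` tool and all names are assumptions made for illustration.

```python
def multiply(arg: str) -> str:
    """Toy tool: multiplies two comma-separated integers."""
    a, b = arg.split(",")
    return str(int(a) * int(b))


def scripted_policy(history: list[str]) -> tuple[str, str, str]:
    """Stand-in for an LLM: picks (thought, action, argument) from the history."""
    last = history[-1]
    if last.startswith("Observation: "):
        return "I have the result.", "finish", last[len("Observation: "):]
    return "I need to compute the product.", "multiply", "6,7"


def react_loop(policy, tools, question, max_steps=5):
    """Alternate reasoning steps with tool calls until the policy finishes."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, action, arg = policy(history)
        history.append(f"Thought: {thought}")
        if action == "finish":
            return arg, history
        observation = tools[action](arg)
        history.append(f"Action: {action}[{arg}]")
        history.append(f"Observation: {observation}")
    return None, history  # step budget exhausted without an answer


answer, trace = react_loop(
    scripted_policy, {"multiply": multiply}, "What is 6 times 7?"
)
```

The `max_steps` bound is a design choice in this sketch: it keeps a misbehaving policy from looping forever.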

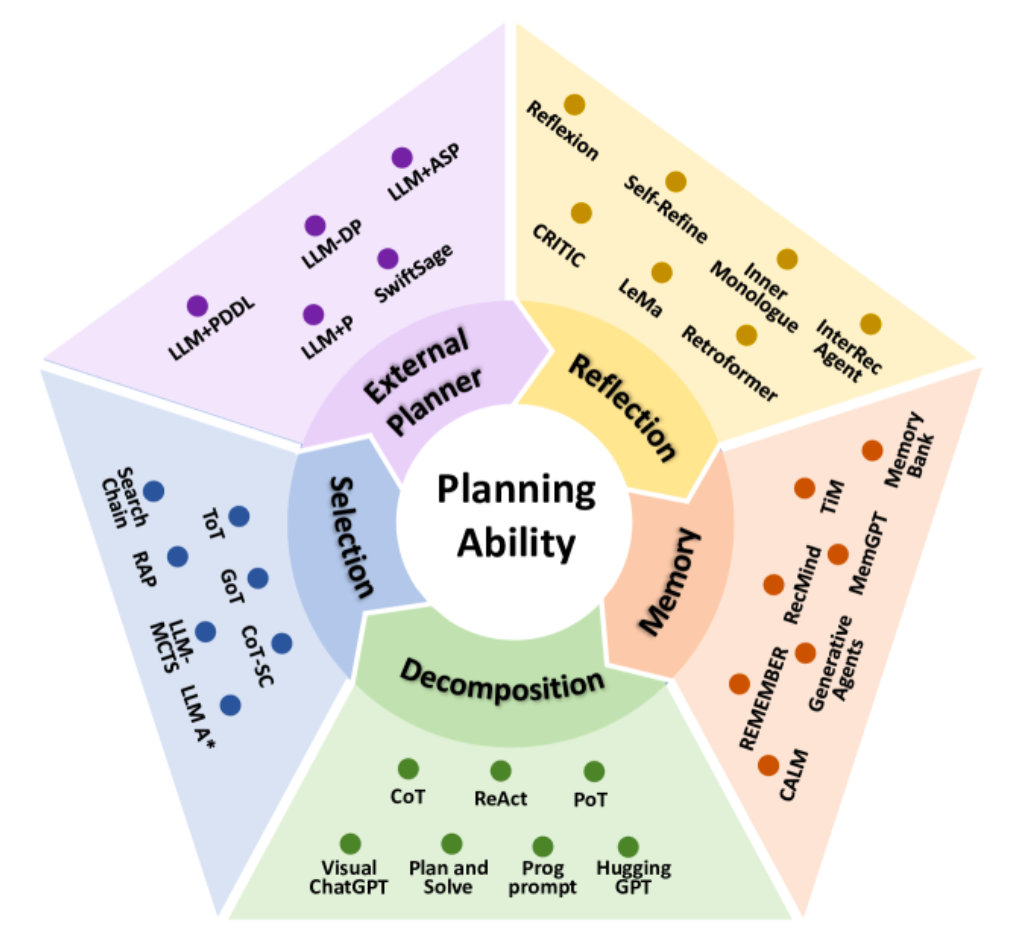

Here is a more comprehensive overview of the planning abilities:

Task Decomposition

This method involves breaking down complex tasks into smaller, more manageable subtasks. By decomposing a task, the agent can plan for each subtask individually, simplifying the overall planning process. This is akin to a divide-and-conquer strategy.
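
A rough sketch of this divide-and-conquer idea follows. In a real agent the decomposition would come from an LLM; here a hypothetical lookup table (`RULES`) plays that role so the example stays runnable.

```python
def decompose(task: str, rules: dict[str, list[str]]) -> list[str]:
    """Recursively expand a task into leaf subtasks, depth-first."""
    subtasks = rules.get(task)
    if not subtasks:
        return [task]  # no rule: the task is small enough to plan directly
    leaves: list[str] = []
    for sub in subtasks:
        leaves.extend(decompose(sub, rules))
    return leaves


# Hypothetical decomposition rules, standing in for LLM output.
RULES = {
    "write report": ["gather data", "draft text"],
    "gather data": ["query database", "clean results"],
}

plan = decompose("write report", RULES)
```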

Multi-Plan Selection

In this approach, the agent generates multiple potential plans for a given task and then selects the most appropriate one using search algorithms, such as tree search methods. This encourages the agent to consider various strategies before committing to a specific plan, enhancing decision-making by evaluating alternative options.
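
The selection step can be sketched as below. This simplified version scores every candidate exhaustively rather than running a tree search, and the candidate plans, tool names, and cost table are invented for illustration.

```python
def select_plan(candidates, score):
    """Return the candidate plan with the highest score."""
    return max(candidates, key=score)


# Hypothetical per-step costs; a real system might ask an LLM or a
# learned value function to score plans instead.
TOOL_COST = {"search_web": 3, "query_cache": 1, "summarize": 2}

candidates = [
    ["search_web", "summarize"],
    ["query_cache", "summarize"],
    ["search_web", "search_web", "summarize"],
]


def negative_cost(plan):
    """Cheaper plans score higher."""
    return -sum(TOOL_COST[step] for step in plan)


best = select_plan(candidates, negative_cost)
```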

External Planner-Aided Planning

Here, the planning process is enhanced by incorporating an external planner module. The LLM formalizes the task and provides necessary information to the external planner, which then generates efficient and feasible plans. This addresses issues like computational efficiency and plan feasibility that might be challenging for the LLM alone.

Reflection and Refinement

This methodology focuses on iterative improvement. After generating an initial plan, the agent reflects on any failures or inefficiencies and refines the plan accordingly. This reflective process allows the agent to learn from mistakes and continuously improve its planning ability by updating its approach based on feedback.
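
One possible shape for this loop is sketched below, under the assumption that execution returns a success flag plus textual feedback. The `execute` and `reflect` functions are toy stand-ins for running a plan and for an LLM-driven critique.

```python
REQUIRED = ["fetch data", "check units", "write summary"]


def execute(plan):
    """Toy executor: succeeds only if every required step is present."""
    missing = [step for step in REQUIRED if step not in plan]
    feedback = f"missing step: {missing[0]}" if missing else ""
    return not missing, feedback


def reflect(plan, feedback):
    """Toy reflection: read the feedback and append the missing step."""
    step = feedback.removeprefix("missing step: ")
    return plan + [step]


def refine(plan, max_rounds=3):
    """Run the plan, reflect on failures, and retry with the revised plan."""
    for round_no in range(max_rounds):
        ok, feedback = execute(plan)
        if ok:
            return plan, round_no
        plan = reflect(plan, feedback)
    return plan, max_rounds  # gave up; the last plan was never verified


final_plan, rounds_used = refine(["fetch data"])
```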

Memory-Augmented Planning

In this approach, the agent utilizes an external memory module that stores valuable information such as commonsense knowledge, past experiences, or domain-specific data. During planning, the agent retrieves relevant information from this memory to inform and enhance its decision-making process, leading to more informed and effective plans.
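
The retrieval step might look like the following sketch. It uses word-overlap (Jaccard) similarity as a deliberately simple substitute for embedding search in a vector store; the memory entries and function names are made up.

```python
def tokenize(text: str) -> set[str]:
    return set(text.lower().split())


def retrieve(memory: list[str], query: str, k: int = 2) -> list[str]:
    """Return the k memory entries most similar to the query."""
    q = tokenize(query)

    def jaccard(entry: str) -> float:
        e = tokenize(entry)
        return len(q & e) / len(q | e)

    return sorted(memory, key=jaccard, reverse=True)[:k]


# Hypothetical stored experiences.
MEMORY = [
    "refund requests need the order id",
    "shipping delays are common in december",
    "always greet the customer by name",
]

context = retrieve(MEMORY, "customer asks for a refund on an order", k=1)
# `context` would be prepended to the planning prompt.
```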

3. LLM Creation

Large language models (LLMs) will be integrated into the service to enhance agent capabilities. Users can define LLMs through an API endpoint, selecting models based on their use case, such as:

- Conversational agents using models like GPT.

- Code generation agents fine-tuned for programming.

- Domain-specific models for specialized use cases (e.g., legal, medical).

Predefined API endpoints will enable users to call LLMs and use them within their workflows to enhance agent performance.
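
As a sketch of what an LLM definition could contain, consider the snippet below. The `LLMEndpoint` class, its fields, the request shape, and the `llm.example.com` URL are all assumptions; real providers each define their own request format, and no network call is made here.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class LLMEndpoint:
    """Hypothetical LLM definition: where to call and with which defaults."""
    name: str
    url: str
    model: str
    temperature: float = 0.0

    def build_request(self, prompt: str) -> str:
        """Serialize a request body (illustrative shape, not a real API)."""
        return json.dumps(
            {"model": self.model, "prompt": prompt, "temperature": self.temperature}
        )


code_llm = LLMEndpoint(
    name="code-assistant",
    url="https://llm.example.com/v1/generate",
    model="example-code-model",
)

payload = json.loads(code_llm.build_request("Write a sort function"))
```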

4. Agent Creation

Once tools and plans are defined, users can build agents by assembling these components. Agents will be defined using:

- Tools: Select which tools the agent has access to.

- Plan: Define the plan that dictates the agent's logic and behavior.

- LLMs: Attach predefined LLMs to augment the agent's capabilities.
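
The assembly of tools, a plan, and an LLM into one agent can be sketched as follows. The `Agent` class and the lambda stand-ins are hypothetical; a real plan and LLM would be far richer than these one-liners.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent: a set of tools, a plan, and an attached LLM."""
    name: str
    tools: dict[str, Callable[[str], str]]
    plan: Callable[[str], list[tuple[str, str]]]  # task -> [(tool, argument)]
    llm: Callable[[str], str]

    def run(self, task: str) -> str:
        results = []
        for tool_name, arg in self.plan(task):
            if tool_name not in self.tools:
                raise KeyError(f"{self.name} has no access to tool {tool_name!r}")
            results.append(self.tools[tool_name](arg))
        # The LLM turns raw tool output into the final response.
        return self.llm("; ".join(results))


support_agent = Agent(
    name="support",
    tools={"lookup_order": lambda order_id: f"order {order_id} shipped"},
    plan=lambda task: [("lookup_order", task.split()[-1])],
    llm=lambda text: f"Summary: {text}",  # stand-in for a real model call
)

reply = support_agent.run("status of 1234")
```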

Types of agents users can create:

- Task Execution Agents: Agents designed to complete specific tasks such as customer support, data analysis, or process automation.

- Decision-Making Agents: Agents that evaluate multiple inputs and make decisions based on data, predefined rules, or LLM-driven analysis.

- Exploratory Agents: Agents that autonomously explore options, iterate through solutions, and optimize towards objectives.

5. Multi-Agent Collaboration

Pre-defined Communication Patterns

Collaboration between agents is essential for solving complex, multi-step problems. The service allows users to define multi-agent systems where agents communicate, share information, and coordinate actions.

The Agent Communication Structure

Communication between agents in LLM-based multi-agent (LLM-MA) systems is crucial for supporting collective intelligence. It can be analyzed from two perspectives: communication paradigms and communication structures.

Communication Paradigms

This refers to the styles and methods through which agents interact. The primary paradigms include:

Cooperative Paradigm

Agents collaborate towards a common goal, sharing information to enhance a collective solution.

Debate Paradigm

Agents engage in argumentative exchanges, presenting and defending their viewpoints while critiquing others. This is effective for reaching consensus or refining solutions.

Competitive Paradigm

Agents pursue their individual goals, which may conflict with those of other agents.

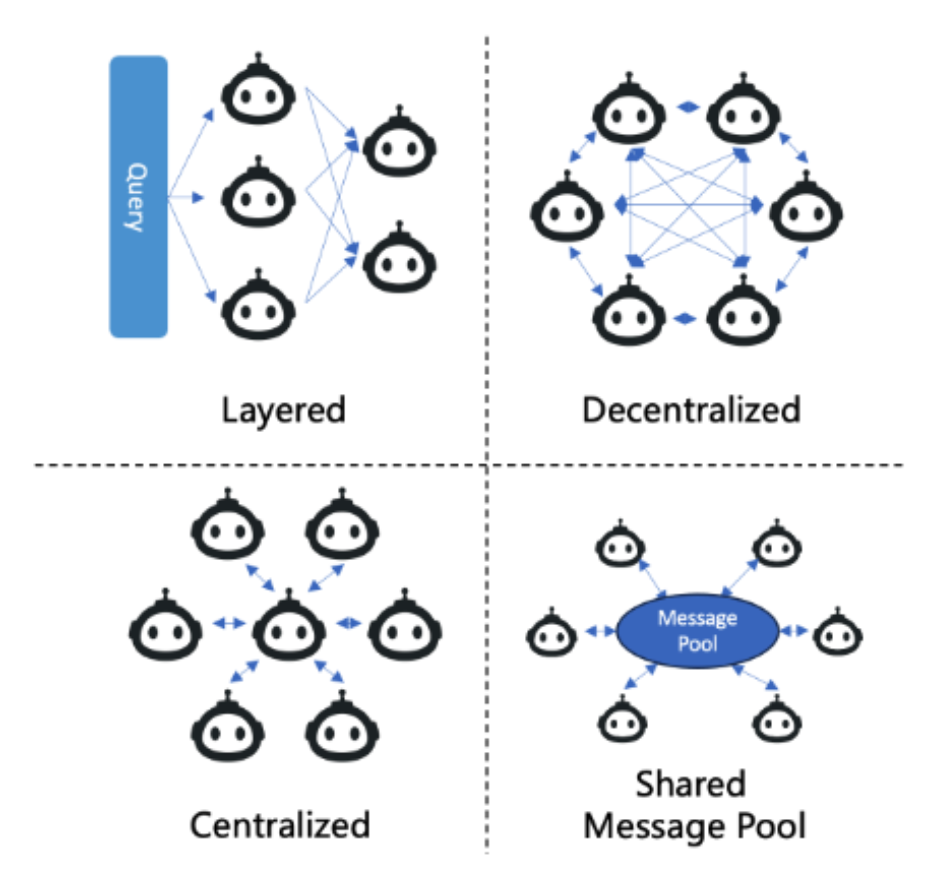

Communication Structures

This defines how communication networks are organized within the multi-agent system. Four typical structures are:

Layered Communication

- Hierarchical Organization: Agents are structured in layers with specific roles.

- Interactions: Primarily occur within the same layer or with adjacent layers.

- Example: DyLAN (Liu et al., 2023) uses a multi-layered feed-forward Dynamic LLM-Agent Network to facilitate dynamic interactions and improve cooperative efficiency.

Decentralized Communication

- Peer-to-Peer Network: Agents communicate directly with each other without a central coordinator.

- Usage: Common in world simulation applications where flexibility and autonomy are important.

Centralized Communication

- Central Coordinator: A central agent or a group of agents manages the communication.

- Interactions: Other agents communicate primarily through this central node.

Shared Message Pool

- Shared Repository: Agents publish messages to a common pool and subscribe to messages relevant to them.

- Benefit: Enhances communication efficiency by organizing messages based on agent profiles.

- Example: MetaGPT (Hong et al., 2023) implements this structure to improve communication efficiency.
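
A shared message pool is essentially a publish/subscribe mechanism. The sketch below illustrates the idea only; it is not MetaGPT's implementation, and the `MessagePool` class, topics, and agent names are hypothetical.

```python
from collections import defaultdict


class MessagePool:
    """Minimal publish/subscribe pool keyed by topic."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # topic -> agent names
        self._inboxes = defaultdict(list)      # agent name -> messages

    def subscribe(self, agent: str, topic: str) -> None:
        self._subscribers[topic].append(agent)

    def publish(self, sender: str, topic: str, content: str) -> None:
        """Deliver the message to every subscriber of the topic but the sender."""
        for agent in self._subscribers[topic]:
            if agent != sender:
                self._inboxes[agent].append((sender, topic, content))

    def inbox(self, agent: str) -> list[tuple[str, str, str]]:
        return list(self._inboxes[agent])


pool = MessagePool()
pool.subscribe("engineer", "design")
pool.subscribe("tester", "code")
pool.publish("architect", "design", "API spec v1")
```

Because agents only receive topics they subscribed to, each one sees the messages relevant to its role instead of the full conversation.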

Pre-defined Workflow

Users can build workflows visually in a drag-and-drop interface; each workflow is then converted into JSON for backend processing. Workflows can include both tools and agents.

Workflows will be configurable with:

- Conditional branches.

- Looping and iteration logic.

- Parallel execution of multiple workflows.
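
To illustrate how a visually built workflow might be represented in JSON and executed on the backend, here is a small sketch. The node schema (`type`, `next`, `if_true`, `if_false`) and the registered action and condition names are assumptions rather than the service's actual format, and only branching and looping are covered, not parallel execution.

```python
import json

# Hypothetical JSON emitted by the drag-and-drop editor.
WORKFLOW_JSON = """
{
  "start": "fetch",
  "nodes": {
    "fetch": {"type": "tool", "run": "increment", "next": "check"},
    "check": {"type": "branch", "condition": "count_below_3",
              "if_true": "fetch", "if_false": "done"},
    "done":  {"type": "end"}
  }
}
"""

# Registries mapping names used in the JSON to Python callables.
ACTIONS = {"increment": lambda state: {**state, "count": state["count"] + 1}}
CONDITIONS = {"count_below_3": lambda state: state["count"] < 3}


def run_workflow(spec, state, max_steps=50):
    """Walk the node graph; a branch pointing backwards forms a loop."""
    node_id = spec["start"]
    for _ in range(max_steps):
        node = spec["nodes"][node_id]
        if node["type"] == "end":
            return state
        if node["type"] == "tool":
            state = ACTIONS[node["run"]](state)
            node_id = node["next"]
        elif node["type"] == "branch":
            taken = CONDITIONS[node["condition"]](state)
            node_id = node["if_true"] if taken else node["if_false"]
        else:
            raise ValueError(f"unknown node type: {node['type']}")
    raise RuntimeError("step limit reached; possible infinite loop")


final_state = run_workflow(json.loads(WORKFLOW_JSON), {"count": 0})
```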

Future Extensions

Expanding AI Logic Models

- Autonomous Exploration: Agents capable of navigating and exploring unknown environments, powered by reinforcement learning algorithms.

- Meta-Learning: Agents that adapt their logic over time by learning from their experiences and interactions.

By providing a no-code/low-code platform, we aim to make AI agent development accessible to everyone, from technical developers to business users, enabling rapid innovation and democratizing the power of intelligent automation.